OpenTelemetry Cost: 7 Reasons It Increases After Rollout (And How to Reduce It)

After rolling out OpenTelemetry in production, many teams are surprised when OpenTelemetry cost increases instead of stabilizing. What begins as a visibility initiative can quickly expand into high-cardinality metrics, trace storage growth, and ingestion spikes.

Understanding why OpenTelemetry cost increases after rollout — and how to reduce OpenTelemetry cost intentionally — requires architectural discipline, not just instrumentation.

Below are the 7 most common reasons OpenTelemetry cost rises in production environments, along with practical ways to control it.

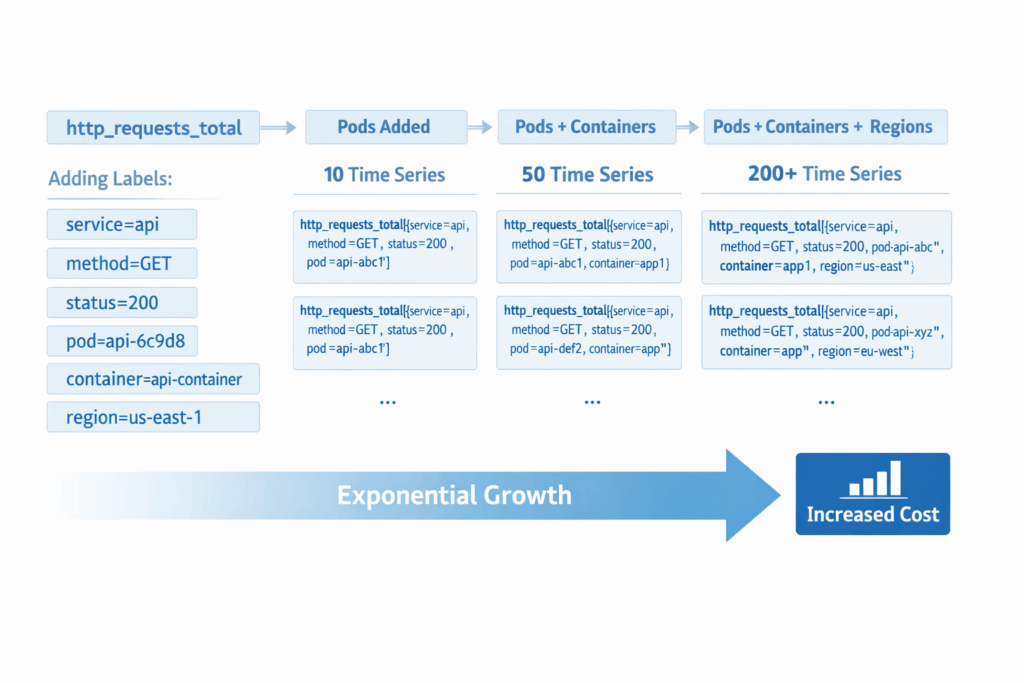

1. High-Cardinality Metrics Explosion

High-cardinality metrics are the most common reason OpenTelemetry cost increases after rollout.

Labels such as user_id, session_id, request_id, dynamic Kubernetes metadata, or tenant identifiers multiply the number of unique time series. Each additional label dimension increases storage and indexing requirements.

Why This Increases OpenTelemetry Cost

Many observability backends price metrics by the number of active time series. The worst-case series count for a metric is the product of the distinct values of each label, so a single unbounded label multiplies the total and drives ingestion, storage, and query cost upward.
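To make the multiplication concrete, here is a minimal sketch of the worst-case arithmetic. The label names and value counts are hypothetical, chosen only for illustration.

```python
from math import prod


def series_count(label_cardinalities: dict[str, int]) -> int:
    """Worst-case time series for one metric: the product of distinct values per label."""
    return prod(label_cardinalities.values())


# Hypothetical label sets for a single request-counter metric.
bounded = {"method": 5, "status_class": 5, "route": 40}
with_user_id = {**bounded, "user_id": 10_000}

print(series_count(bounded))       # 1000
print(series_count(with_user_id))  # 10000000
```

Real series counts are usually lower than the worst case because not every label combination occurs, but the upper bound shows why one identifier-style label can dominate the bill.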

How to Reduce This Cost

- Remove unbounded identifiers from metric labels

- Avoid dynamic labels tied to users or sessions

- Audit high-cardinality dimensions regularly

- Define metric label standards before rollout

Cardinality discipline is foundational to OpenTelemetry cost control.
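One way to enforce a label standard is an allowlist applied before a measurement is recorded. The sketch below is plain Python rather than an OpenTelemetry API; `ALLOWED_LABELS` and `sanitize_labels` are hypothetical names. In a real deployment the same effect is commonly achieved with SDK metric views or a Collector processor.

```python
# Hypothetical allowlist; in practice this would come from a team's label standard.
ALLOWED_LABELS = {"service", "route", "method", "status_class"}


def sanitize_labels(labels: dict[str, str], allowed: set[str] = ALLOWED_LABELS) -> dict[str, str]:
    """Keep only allowlisted label keys, dropping unbounded identifiers."""
    return {key: value for key, value in labels.items() if key in allowed}


raw = {"service": "checkout", "route": "/cart", "user_id": "u-8341", "session_id": "s-77"}
print(sanitize_labels(raw))  # {'service': 'checkout', 'route': '/cart'}
```

An allowlist fails closed: a new, unreviewed label is dropped by default instead of silently creating new series.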

2. Unbounded Trace Sampling

Tracing is powerful — and expensive.

Many teams begin with 100% sampling to gain visibility. Without a head-based or tail-based sampling strategy, trace volume grows in direct proportion to request traffic.

Why This Increases OpenTelemetry Cost

Trace storage is often billed by ingestion volume or retained spans. Capturing every request — especially low-value endpoints — increases cost without improving reliability insight.

How to Reduce This Cost

- Implement head-based sampling early

- Use tail-based sampling for error-focused traces

- Reduce sampling for non-critical services

- Monitor trace ingestion rate continuously

Intentional sampling significantly reduces OpenTelemetry cost while preserving signal quality.
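The two strategies differ in when the decision is made. The sketch below illustrates the idea in plain Python and is not the OpenTelemetry SDK or Collector implementation. The span dictionaries with `error` and `duration_ms` keys, the 5% baseline, and the 1000 ms threshold are all assumptions for illustration.

```python
def head_sample(trace_id_hex: str, ratio: float) -> bool:
    """Head decision at trace start: keep if the low 64 bits of the ID fall below ratio * 2**64.

    The decision is deterministic per trace ID, so every service agrees on it.
    """
    low_bits = int(trace_id_hex[-16:], 16)
    return low_bits < int(ratio * 2**64)


def tail_sample(spans: list[dict], trace_id_hex: str,
                baseline_ratio: float = 0.05, slow_ms: float = 1000.0) -> bool:
    """Tail decision after the trace completes: always keep errors and slow traces."""
    if any(span["error"] for span in spans):
        return True
    if max((span["duration_ms"] for span in spans), default=0.0) >= slow_ms:
        return True
    # Healthy, fast traces fall back to a small deterministic baseline.
    return head_sample(trace_id_hex, baseline_ratio)


healthy = [{"error": False, "duration_ms": 12.0}]
failed = [{"error": True, "duration_ms": 12.0}]
print(tail_sample(healthy, "f" * 32))  # False
print(tail_sample(failed, "f" * 32))   # True
```

Head sampling is cheap because dropped traces are never exported, while tail sampling needs the whole trace buffered first, which is why it is usually run in a collector tier rather than in each service.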

3. Over-Instrumentation Without Defined Use Cases

It’s easy to instrument “everything.”

Metrics, logs, spans, attributes — often emitted without clear operational purpose.

Why This Increases OpenTelemetry Cost

Telemetry that isn’t tied to reliability outcomes becomes storage overhead. Collecting signals “just in case” means telemetry volume grows with every new service, endpoint, and deployment, without a matching gain in insight.

How to Reduce This Cost

- Tie telemetry to SLOs and service objectives

- Remove metrics not used in dashboards or alerts

- Limit verbose debug-level spans in production

- Audit unused signals quarterly

Visibility without intent increases cost without increasing clarity.
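A periodic audit can start as a simple set difference between what is emitted and what is actually referenced. The metric names below are hypothetical, and the two input sets are assumed to come from your backend's metric catalog and from your dashboard and alert definitions.

```python
def unused_metrics(emitted: set[str], referenced: set[str]) -> list[str]:
    """Metrics that are emitted but never referenced by a dashboard or alert."""
    return sorted(emitted - referenced)


# Hypothetical inventory for one service.
emitted = {"http.server.duration", "checkout.orders", "cart.debug.cache_probe", "jvm.gc.pause"}
referenced = {"http.server.duration", "checkout.orders"}

print(unused_metrics(emitted, referenced))  # ['cart.debug.cache_probe', 'jvm.gc.pause']
```

The output is a list of candidates for review, not for automatic deletion, since some signals are only used during incident investigation.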

4. Retention and Storage Misalignment

Retention policies are often overlooked during rollout.

High-resolution metrics and traces may be stored longer than operationally necessary.

Why This Increases OpenTelemetry Cost

Storage accumulates quietly. If retention isn’t tiered or aligned with use cases, long-term storage becomes one of the largest contributors to OpenTelemetry cost.

How to Reduce This Cost

- Define retention tiers by signal type

- Reduce high-resolution metric retention windows

- Store full traces only for critical services

- Align retention with compliance requirements

Retention discipline is as important as ingestion discipline.
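The effect of tiering can be estimated with steady-state arithmetic: stored volume is roughly daily ingestion multiplied by retention days, summed across tiers. The volumes and windows below are hypothetical, and the sketch ignores compression and downsampling.

```python
from dataclasses import dataclass


@dataclass
class Tier:
    name: str
    daily_gb: float
    retention_days: int


def steady_state_gb(tiers: list[Tier]) -> float:
    """Approximate stored volume once every tier has filled its retention window."""
    return sum(tier.daily_gb * tier.retention_days for tier in tiers)


# Hypothetical: 50 GB/day of traces, all kept for 30 days.
flat = [Tier("all traces", 50.0, 30)]
# Same ingestion, but only critical services keep full traces for 30 days.
tiered = [Tier("critical traces", 10.0, 30), Tier("other traces", 40.0, 3)]

print(steady_state_gb(flat))    # 1500.0
print(steady_state_gb(tiered))  # 420.0
```

In this example ingestion is unchanged, yet stored volume drops by more than two thirds purely from shortening retention for the non-critical tier.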

5. Collector Misconfiguration and Retry Amplification

The OpenTelemetry Collector is powerful — but misconfiguration can increase cost.

Over-aggressive retry policies, duplicate exporters, and inefficient batching can amplify ingestion volume.

Why This Increases OpenTelemetry Cost

Retries and duplicated exports inflate backend ingestion. Two exporters sending identical telemetry to the same billed backend can silently double ingestion cost.
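A rough worst-case model shows how these factors compound. It assumes each send attempt fails independently with the same probability and that failed attempts were still ingested by the backend (for example, a timeout after the data was accepted). Both are simplifying assumptions, and the function names are hypothetical.

```python
def expected_sends(failure_prob: float, max_retries: int) -> float:
    """Expected send attempts per batch: attempt k+1 happens only if the first k all failed."""
    return sum(failure_prob ** k for k in range(max_retries + 1))


def ingestion_multiplier(failure_prob: float, max_retries: int, duplicate_exporters: int = 1) -> float:
    """Worst-case ingestion relative to sending every batch exactly once."""
    return expected_sends(failure_prob, max_retries) * duplicate_exporters


# Hypothetical: 20% of sends time out, up to 5 retries.
print(round(ingestion_multiplier(0.2, 5), 3))                         # 1.25
print(round(ingestion_multiplier(0.2, 5, duplicate_exporters=2), 3))  # 2.5
```

Under these assumptions retries alone add about 25%, while a duplicated exporter doubles whatever the retry behavior already produces.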

How to Reduce This Cost

- Audit collector retry configurations

- Verify exporters are not duplicating signals

- Tune batching and queue settings

- Monitor ingestion anomalies

Collector architecture should be intentional, not default-driven.

6. SLO-Independent Telemetry Collection

Many environments collect telemetry without tying it to reliability outcomes.

SLOs may exist — but signals aren’t aligned to them.

Why This Increases OpenTelemetry Cost

Signals that don’t support SLO measurement or alerting become noise. Telemetry volume grows without improving reliability decisions.

How to Reduce This Cost

- Align metrics to defined SLOs

- Use burn-rate alerts instead of raw threshold alerts

- Remove signals not mapped to reliability objectives

- Focus on error-budget-driven visibility

OpenTelemetry cost decreases when telemetry supports decisions.
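Burn rate expresses how fast the error budget is being consumed: the observed error ratio divided by the ratio the SLO allows. The sketch below pairs a long and a short window so that an alert fires only while the problem is still ongoing. The 14.4 threshold is a commonly cited value for a 1-hour window against a 30-day SLO and is an assumption here, as are the function names.

```python
def burn_rate(error_ratio: float, slo_target: float) -> float:
    """How many times faster than allowed the error budget is being spent."""
    return error_ratio / (1.0 - slo_target)


def should_page(long_window_ratio: float, short_window_ratio: float,
                slo_target: float, threshold: float = 14.4) -> bool:
    """Page only if both the long and the short window exceed the burn-rate threshold."""
    return (burn_rate(long_window_ratio, slo_target) >= threshold
            and burn_rate(short_window_ratio, slo_target) >= threshold)


# Hypothetical 99.9% availability SLO.
print(round(burn_rate(0.005, 0.999), 2))  # 5.0
print(should_page(0.02, 0.03, 0.999))     # True
print(should_page(0.02, 0.001, 0.999))    # False
```

An alert like this needs only the request and error counts behind the SLO, which is a useful test for deciding which metrics are worth keeping.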

7. Backend Pricing Model Blind Spots

Even well-designed telemetry can be expensive if pricing models aren’t understood.

Backends may charge by:

- Active time series

- Ingestion volume (GB)

- Trace spans

- Data retention duration

Why This Increases OpenTelemetry Cost

Teams often underestimate how quickly telemetry growth interacts with vendor pricing tiers. Cost increases may not be visible until billing cycles complete.

How to Reduce This Cost

- Understand your backend’s pricing model

- Monitor ingestion and series counts proactively

- Forecast cost before scaling services

- Revisit pricing assumptions quarterly

Cost transparency prevents surprise escalation.
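A forecast can be as simple as multiplying projected volumes by unit prices for each billed dimension. The unit prices and volumes below are invented for illustration and do not reflect any vendor's actual pricing.

```python
from dataclasses import dataclass


@dataclass
class Pricing:
    per_1k_series: float      # price per 1,000 active time series per month
    per_gb_ingested: float    # price per GB ingested
    per_million_spans: float  # price per million spans


def monthly_cost(pricing: Pricing, active_series: int, ingest_gb: float, spans: int) -> float:
    """Sum the cost of each billed dimension for one month."""
    return (active_series / 1_000 * pricing.per_1k_series
            + ingest_gb * pricing.per_gb_ingested
            + spans / 1_000_000 * pricing.per_million_spans)


# Hypothetical unit prices and current volumes.
pricing = Pricing(per_1k_series=8.0, per_gb_ingested=0.5, per_million_spans=2.0)
today = monthly_cost(pricing, active_series=250_000, ingest_gb=3_000, spans=400_000_000)
doubled = monthly_cost(pricing, active_series=500_000, ingest_gb=6_000, spans=800_000_000)

print(today)    # 4300.0
print(doubled)  # 8600.0
```

Running the same calculation with projected volumes before adding services turns a billing surprise into a planning input. Real pricing often has tiers and commitments that this linear sketch does not model.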

How to Reduce OpenTelemetry Cost in Production

Reducing OpenTelemetry cost requires architectural guardrails, not reactive cleanup.

A sustainable strategy includes:

- Cardinality governance policies

- Defined sampling strategies

- Retention tier alignment

- SLO-driven signal design

- Continuous ingestion monitoring

- Periodic telemetry audits

OpenTelemetry itself is not inherently expensive. Undisciplined telemetry design is.

Frequently Asked Questions About OpenTelemetry Cost

Why does OpenTelemetry cost increase after rollout?

OpenTelemetry cost increases due to high-cardinality metrics, full trace sampling, over-instrumentation, retention misalignment, and ingestion growth that outpaces design guardrails.

How can I estimate OpenTelemetry cost?

Estimate OpenTelemetry cost by analyzing time series counts, trace ingestion rates, retention windows, and backend pricing models. Monitor these continuously as services scale.

Does OpenTelemetry reduce monitoring costs?

OpenTelemetry can reduce vendor lock-in, but it does not automatically reduce cost. Cost efficiency depends on sampling strategy, cardinality control, and retention policies.

Is OpenTelemetry more expensive than vendor-native agents?

Cost depends on telemetry design, not just the tool. Poorly controlled telemetry can make any backend expensive.

Final Thoughts

If your team is experiencing rising OpenTelemetry cost or increasing alert noise after rollout, the issue is rarely the tooling itself. It is almost always a signal design and governance issue.

Focused telemetry discipline can significantly reduce OpenTelemetry cost while improving reliability clarity.
